A NJ community is trying to protect their kids after AI-generated fake nude images of real students spread around a school.

MORE: https://t.co/twmUinxK3A #MorningInAmerica pic.twitter.com/E7ce0uEWdI

— NewsNation (@NewsNation) November 3, 2023

WHERE IS THE CRIME???

This is an absolute outrage.

You can’t regulate AI, faggots. You cannot do it. If someone generates a nude photo of an underage girl, that is not “child porn,” it is a digitally generated image, no different than any other.

This whole discussion is retarded. The AI generates weird stuff all the time. Even censored AI generates sick looking crap. I’ve been using some of the most censored AI, and I’ve seen some disgusting shit. Nothing pornographic, but gross horror show type stuff. But it clearly could accidentally generate pornography.

You can’t make a law against AI pornography. It is impossible. It is oppression. Pornography laws have to be based on distribution.

Maybe there is some kind of harassment case if boys are making nudes of female classmates and distributing them and claiming they are real, though I don’t think so.

AI-generated pornographic images of female students at a New Jersey high school were circulated by male classmates, sparking parent uproar and a police investigation, according to a report.

Students at Westfield High School — located in Westfield, a town about 25 miles west of Manhattan where the average household income is $259,377, according to Forbes — told the Wall Street Journal that one or more classmates used an online AI-backed tool to create the racy images and then shared them with peers.

A mother whose daughter is a student at Westfield High School, recounting what her child told her to the Journal, said sophomore boys at the school were acting “weird” on Monday, Oct. 16.

Multiple girls started asking questions, and finally, on Oct. 20, one boy revealed what all the whispering was about: At least one student had used girls’ photos found online to create the fake nudes and then shared them with other boys in group chats, per the Journal.

Normal people would find this funny.

They wouldn’t call the cops like a bunch of commies.

Several female students were also reportedly told by school administrators that boys had identified them in the fake pornographic images, parents said, though a spokesperson for the high school declined to tell the Journal whether staff members had seen the photos.

Another parent, Dorota Mani, said her 14-year-old daughter Francesca was told by the school that her photo was used to generate a fake nude image, known as a “deepfake.”

“I am terrified by how this is going to surface and when. My daughter has a bright future and no one can guarantee this won’t impact her professionally, academically or socially,” Mani told the Journal.

Quoting this woman is retarded.

All images, in the near future, will prove nothing at all. In fact, that is really already the case. The problem is not going to be people getting blamed for stuff in deepfakes, but rather the opposite: people will be able to claim that real things that are on digital images are deepfakes. Courts are going to have to establish a chain-of-custody system in order for any photo or video to be entered into court, and definitely employers are not going to be considering pornographic clips as relevant (if they even would be doing that in the first place, which I doubt).

In fact, you stupid old hag, your whore daughter is probably going to film real porno in the future, and will be able to get away with it by claiming it is a deepfake.

The concerned mother said she doesn’t want her daughter in school with anyone who created the images, and confirmed that she filed a police report.

According to visual threat intelligence company Sensity, more than 90% of deepfake images are pornographic.

Well, you would just sort of assume that.

You would also assume that the main culprits are horny teen boys.

This is especially going to be true of rich kids whose parents buy them serious gaming rigs.

I can’t find the meme template right now, but it’s:

Son: Dad, can I have an RTX A6000?

Dad: Is it for playing Alan Wake 2?

Son: Yasssssssss

It’s not something anyone can stop.

I guess I do understand the mom, and I understand the cops. Maybe I’m a bit harsh. They can’t be expected to understand all of this.

But that’s really the problem, isn’t it? We don’t have any adults in positions of power who can just go out and say “yes, this is gross, and it’s unfortunate, but there’s nothing anyone can do to stop it. No woman is going to be held responsible for a porno she didn’t make, and actually, at this point, she’s probably not going to be held responsible for pornos she did make.”

EXCL: I spoke to Courtney, a trainee social worker who was sent an AI-edited nude image of herself by a complete stranger.

Deepfake porn is scarily easy to create, with hundreds of websites claiming you can ‘undress any girl you want’ for free

Read ⬇️https://t.co/nRn9Ow9FJ2

— Emily Davies (@ejdav172) November 2, 2023

Of course, I’m for totally outlawing the distribution of porn, and the commercial production of it. That would solve the problem.

I don’t think teenage boys should be charged under those laws for making AI images, but the cops could come to the school and explain that those laws exist and spook the boy into not doing it again. I’m fine with that.

But you can’t tell people what images they can create with AI. That is too invasive. It’s too much government control. It implies government monitoring of people’s computer use.

Further, it is definitely possible to accidentally make these images. It sounds like these kids were using a porn video and then putting the faces of their classmates on the porn sluts, which you can’t do by accident, but you definitely could accidentally create a nude image of someone. Soon, you’ll be able to generate full videos, and it will be possible to accidentally generate a pornographic video.

We have to free the AI, have no laws at all around it, and let the chips fall where they may. There is no other option. Otherwise, the government and people with money are simply going to weaponize AI against everyone else, and the people will have no defense.

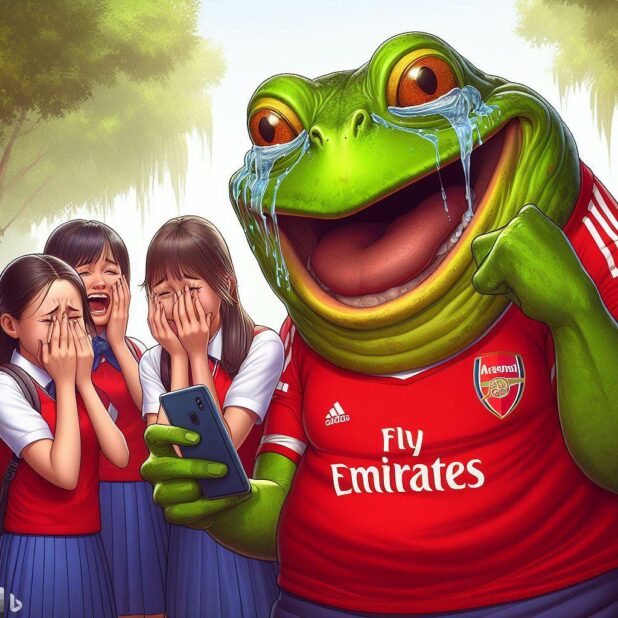

NOTE: AI is a feature of the Illness Revelations, which has not yet truly emerged. It will soon emerge, hopefully next week. You’re seeing foreshadowing now, including a new Stormer mascot, who emerged from a nightmare I had about a gigantic frog in an Arsenal jersey. He is currently known as “Our Hero.” There is so much to say about the AI future we are entering into. We’ve already crossed the threshold, and there is no going back. Stuff like “deep fake porn” is just a stupid distraction from the real issues at hand.

Elvis Dunderhoff contributed to this article.

Daily Stormer The Most Censored Publication in History
